Get Involved With Us

Ready to shape the future of AI in media? Explore our educational programs, attend our events, or connect with fellow professionals. Your voice matters—let’s lead the conversation together.

Published on February 10, 2026

A practical guide to our governance model, priorities, and collaboration.

The AI in Media Institute is a sector-led organisation focused on responsible, human-centred AI adoption across media and creative industries, with a core commitment to ensuring AI is developed and deployed ethically, efficiently, and in ways that optimise long-term human and cultural outcomes.

We are not a regulator, a lobby group, or a technology vendor. Our role is to convene industry, research real-world practice, and support the co-creation of evidence-based frameworks and guidance that organisations can align around.

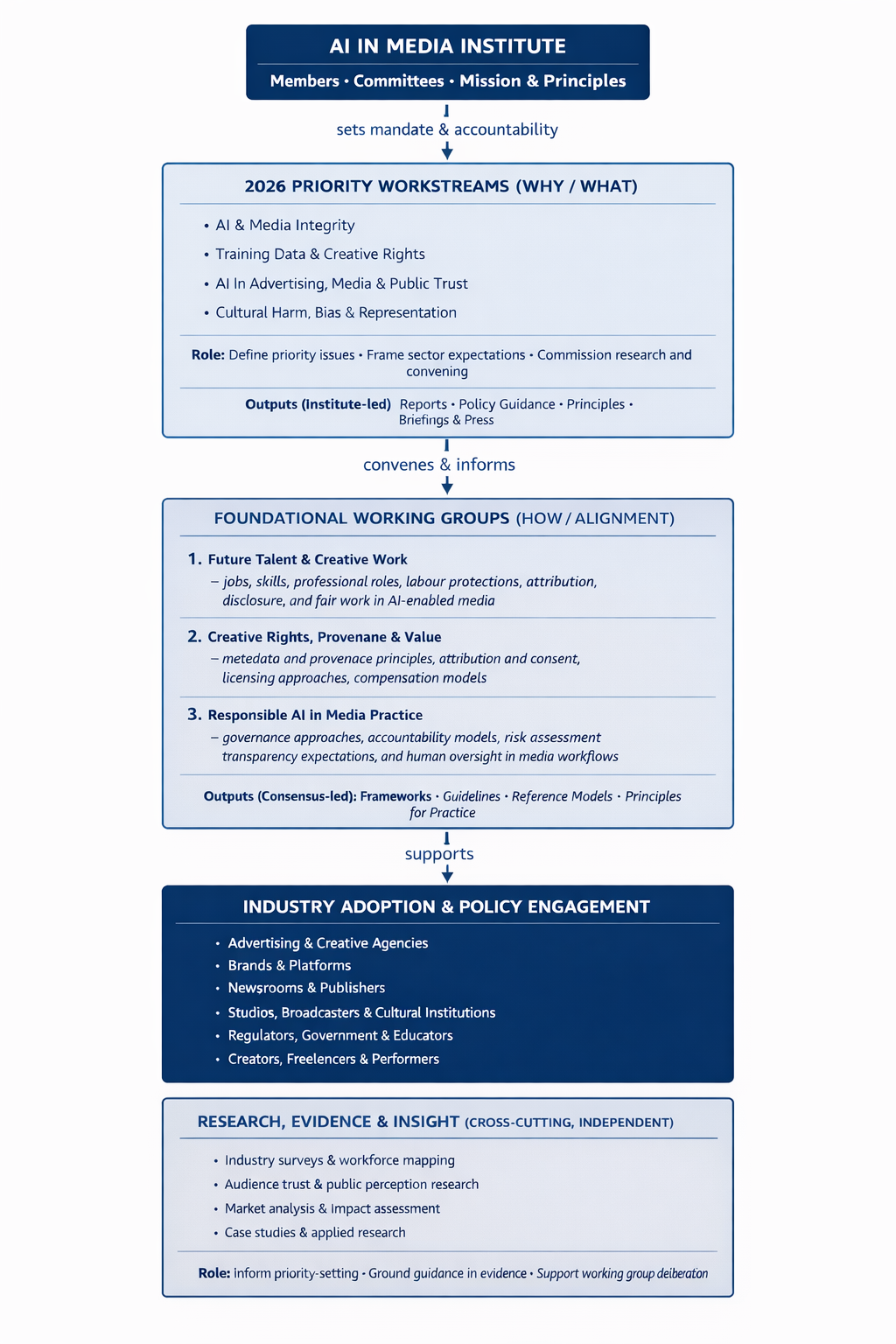

The Institute operates through a clear structure designed to balance leadership, evidence, and collaboration.

All Institute activity is guided by the principle that AI in media should be lawful, ethical, efficient, and human-beneficial by design, not retrofitted after harm occurs.

Members and committees provide legitimacy, oversight, and strategic direction. They ensure that the Institute’s work reflects the realities of professional media and creative practice.

Committees set mandates, approve programmes of work, and provide accountability.

Priority workstreams define what matters most at a given moment, particularly where rapid AI adoption presents risks to creative labour, public trust, cultural integrity, or long-term human outcomes.

They are time-bound, public-facing, and focused on urgent sector challenges.

Workstreams:

They do not enforce standards or implement systems, but they do shape shared expectations for ethical, responsible, and future-proof AI practice across the sector.

Foundational Working Groups are standing, cross-industry forums that operate through structured roundtables and working sessions.

Their role is to:

Working groups are deliberative, consensus-led, and non-regulatory, with a shared responsibility to foreground human oversight, fairness, transparency, and sustainability in AI-enabled media workflows.

Research underpins all Institute activity and operates independently.

The Institute commissions and produces:

Research may be published independently and informs both workstreams and working group deliberations.

Different types of outputs serve different purposes:

This separation ensures clarity, credibility, and speed, while maintaining ethical integrity and reducing the risk of harm, misuse, or unintended consequences as AI capabilities evolve.

Organisations and individuals can engage by:

The Institute is open to collaboration across media, technology, culture, education, and policy.

The AI in Media Institute exists to provide structure where fragmentation exists, evidence where assumption dominates, and alignment where practice is diverging.

Our model is designed to support responsible AI adoption that strengthens creativity, protects public trust, and sustains creative work, ensuring AI is used efficiently, governed responsibly, and aligned with the best possible human, cultural, and societal outcomes over time.
